Positioning AI

It’s sometimes useful to describe where things are positioned as a way to think about them. When I was part of the Universal Credit team that was designing tools for face-to-face appointments in job centres, we created screens that could be seen by both staff and the public — a shared space that sat alongside the conversation, rather than being owned by one or the other.

To take a more common example, a web browser is local to a user and is used to talk to, and interpret, something remote. A browser belongs to its user and can be customised to their needs.

The idea of positioning feels useful as a way to cut through, or categorise, the general excitement that “AI can do X”. The response should be “maybe, but how and where?”, yet the claim often arrives bundled with unstated assumptions about exactly that.

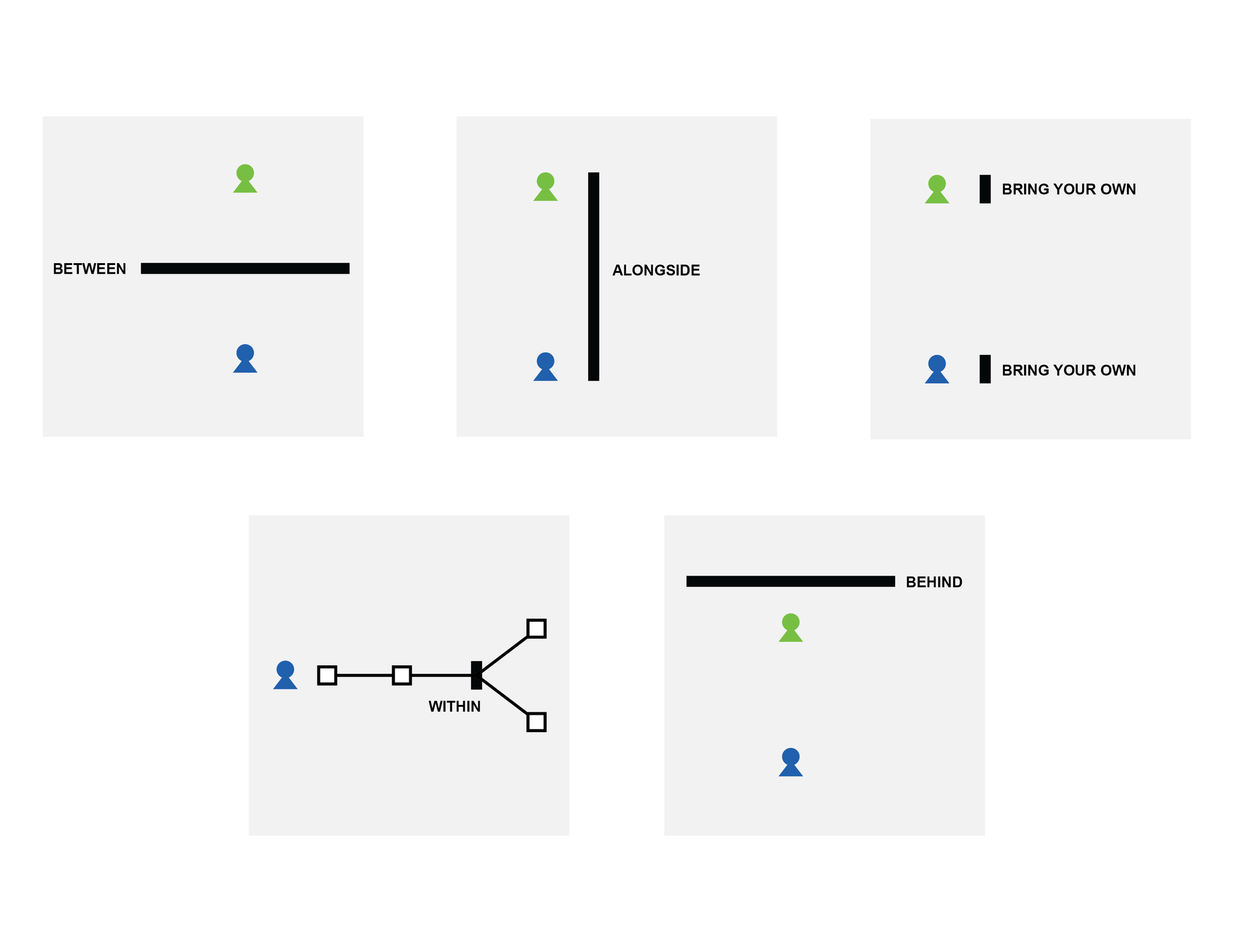

Below are some examples of positioning AI in different places in a user’s interaction with a service.

For simplicity, let’s assume we have two users: a customer using the service and a member of staff who operates it.

Rather than something consequential like welfare, social work, therapy, or 999 call centres, let’s assume our service is an AI-enabled kitchen design service. And let’s assume that when we talk about AI here we mean a mix of technologies (transcription, image generation, text generation, agency).

If some of the examples below seem ridiculous and have you thinking “that’s not how I want to order my kitchen!”, then that hopefully helps with thinking about more consequential uses too.

Between

Here, the AI sits between the customer and the staff member, who has little or no direct contact with the customer. The customer describes the kitchen they want via a chat interface, which builds and updates a shopping basket. There is a route to escalate to a human if the customer gets stuck.

This is broadly similar to a customer service chatbot.

Alongside

Here, the customer and staff member collaborate around a shared, AI-generated design. The AI augments a live conversation rather than replacing it.

Bring your own (customer side)

Here, the customer uses their own AI assistant on a personal device. They describe the kitchen they want, and the AI submits the order directly on the company’s website, including handling payment.

This is similar to a web browser autofilling forms, or a patient bringing their own device into a consultation to prompt questions and capture information.

Bring your own (staff side)

Here, the customer speaks directly to the staff member, but the staff member is supported by an AI assistant. It suggests the next best questions to ask and highlights gaps or inconsistencies in what the customer has said.

Within

Here, AI is embedded within a back-office workflow. For example, it might detect common kitchen layout issues and flag them for review.

Behind

Here, the AI operates in the background, passively observing a conversation about the customer’s requirements. At the end of the interaction, it summarises the discussion and drafts an order in the order management system. The staff member reviews and confirms it, and the customer receives a summary by email.

This is similar to transcription and summarisation tools used in areas like social work.

In conclusion: visibility, relationships and control

The reason I think this helps is that it starts to shift us away from seeking 'use-cases' and towards asking about the qualities of the experience we want to design. Does it matter how visible the people and organisations are compared with the technology? Does it improve a valuable relationship, diminish it, or was the relationship inconsequential in the first place? Does it give users meaningful control, or does it remove unnecessary control and administrative burden?